For performance marketers, the transition from static image carousels to video-first strategies has been less of a choice and more of a survival tactic. Platforms like TikTok, Meta, and YouTube Shorts favor high-motion assets, yet the cost of traditional video production remains a barrier to testing at scale. Generative AI promised to collapse these costs, but early adopters quickly hit a wall: coherence. It is relatively easy to generate a beautiful, static scene; it is significantly harder to move a camera through that scene without the environment melting into a surrealist nightmare.

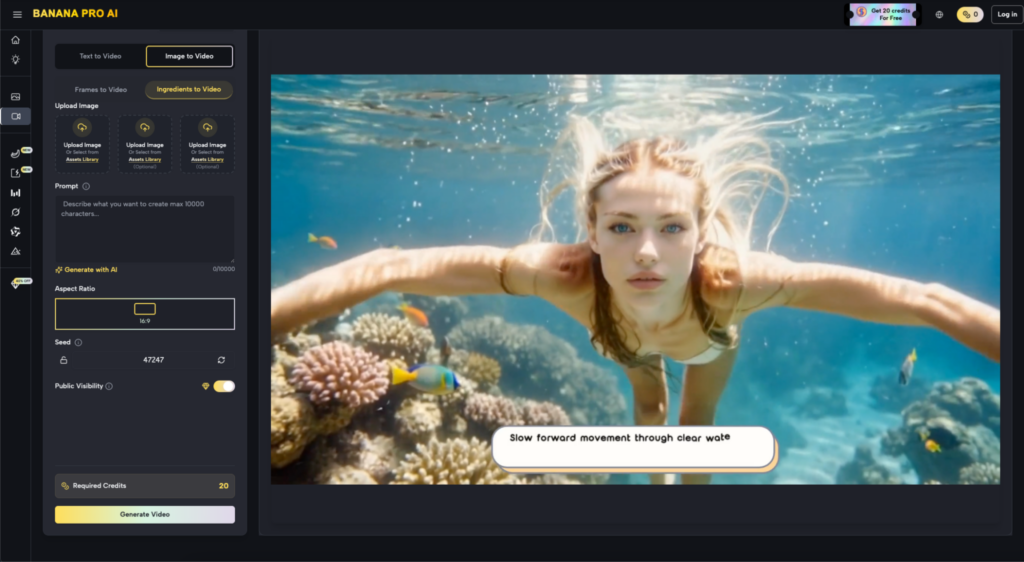

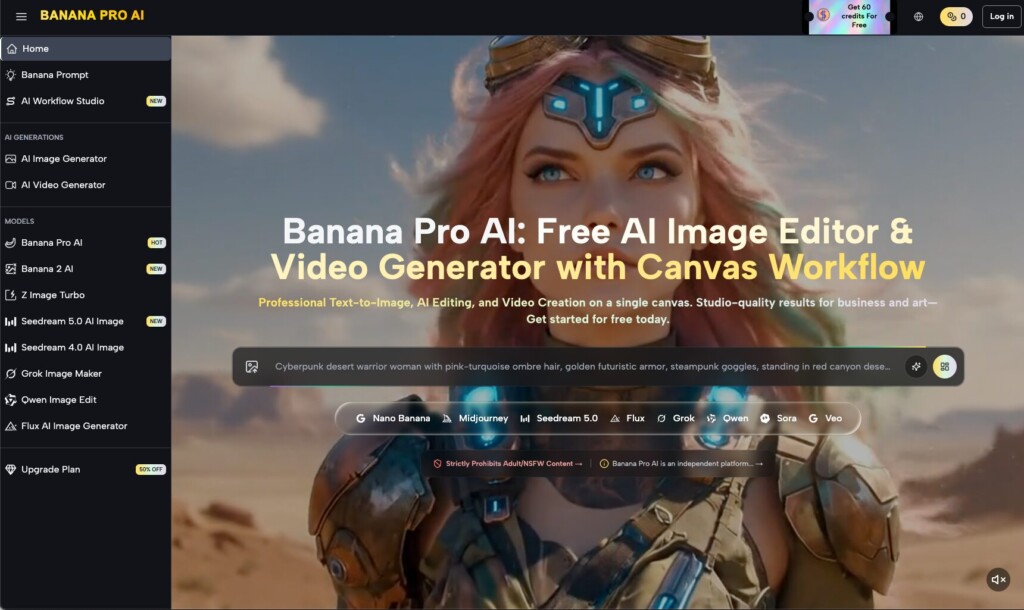

In the current landscape of creative operations, the bottleneck has shifted from “making an image” to “controlling the motion.” Professional operators are no longer looking for a “generate video” button that acts as a black box. Instead, they require systems that allow for precise shaping of camera movement, subject velocity, and temporal stability. This is where the workflow surrounding Banana AI has begun to differentiate itself, moving away from the chaotic “slot machine” approach toward a more deterministic production pipeline.

The Physics of Attention in Performance Marketing

Motion is not merely an aesthetic choice in digital advertising; it is a signal to the algorithm. A sudden zoom-in or a panning shot across a product creates a visual hook that interrupts the scroll. However, when AI generates these hooks, the primary challenge is maintaining the integrity of the subject. If a product’s label changes font mid-pan, or a person’s limbs morph during a walking cycle, the resulting “uncanny valley” effect can trigger subconscious distrust in the viewer, which is likely to depress the conversion rate.

To address this, operators are increasingly using Nano Banana Pro to bridge the gap between prompt-based spontaneity and cinematic control. The goal is to move beyond the default “slow-drift” motion that plagues most AI video generators. A slow pan might look pretty, but it rarely creates the urgency that high-performance creative requires. We need to dictate the physics: How fast does the camera move? Does the subject stay centered? Does the background blur realistically with the motion?

Structuring the Movement: Camera vs. Subject

Effective motion control requires a bifurcated approach. You have to think like a director of photography (DP) and a choreographer simultaneously. In a disciplined workflow, the operator first defines the camera’s relationship to the world before worrying about what the subject is doing.

When working within the Banana Pro ecosystem, the initial frame is the anchor. If you start with a low-quality or structurally weak image, the video will amplify those flaws in every frame that follows. This is why many teams start with an AI Image Editor to lock in lighting, geometry, and brand assets before a single frame of motion is rendered. Once that foundation is solid, the operator can apply motion parameters that dictate the “swing” of the camera.

The Challenge of Consistent Perspective

One of the most persistent limitations in generative video is the loss of 3D consistency. As the camera moves around an object, the model must “guess” what the unseen sides of that object look like. Currently, no model, Nano Banana included, does this perfectly, and the uncertainty is greatest during a full 360-degree orbit around a complex subject. Operators should reset their expectations accordingly: instead of aiming for a single long take, it is often more effective to generate three or four high-coherence 2-second clips and stitch them together in traditional editing software. This “modular motion” approach keeps temporal artifacts from accumulating over a long generation.
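The modular approach is mostly bookkeeping. The sketch below is a hypothetical planning helper, not part of any Banana AI tool: it splits a target runtime into clips of at most two seconds and writes a concat list in the format accepted by ffmpeg's concat demuxer. The `clip_NN.mp4` filenames are assumptions; substitute whatever your generator actually exports.

```python
from dataclasses import dataclass


@dataclass
class ClipSegment:
    index: int         # position in the final edit
    start_s: float     # where the clip begins on the timeline, in seconds
    duration_s: float  # requested generation length, in seconds


def plan_modular_clips(total_s: float, clip_s: float = 2.0) -> list[ClipSegment]:
    """Split a target runtime into short clips that are generated separately."""
    if total_s <= 0 or clip_s <= 0:
        raise ValueError("durations must be positive")
    segments: list[ClipSegment] = []
    start = 0.0
    while start < total_s - 1e-9:  # tolerance guards against float drift
        duration = min(clip_s, total_s - start)  # the last clip may be shorter
        segments.append(ClipSegment(len(segments), round(start, 3), round(duration, 3)))
        start += duration
    return segments


def concat_list(segments: list[ClipSegment]) -> str:
    """Build an ffmpeg concat-demuxer list; the filenames are hypothetical."""
    return "\n".join(f"file 'clip_{s.index:02d}.mp4'" for s in segments) + "\n"


if __name__ == "__main__":
    plan = plan_modular_clips(7.0)
    for seg in plan:
        print(seg)
    print(concat_list(plan), end="")
```

Saved as `list.txt`, the output can be stitched with `ffmpeg -f concat -safe 0 -i list.txt -c copy out.mp4`, provided every clip shares the same codec, resolution, and frame rate; otherwise drop `-c copy` so ffmpeg re-encodes.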

Directing Velocity and Pacing

Pacing in AI video is often controlled by a “motion bucket” value or a similar numerical scale exposed by the interface. However, a high motion score doesn’t always produce a better video; often it just produces more noise. To achieve controlled velocity, the operator must align the prompt with the motion settings.

For instance, if your prompt describes a “dynamic, high-speed chase” but your motion setting is low, the AI will struggle to reconcile the two, leading to flickering or strange warping. Conversely, applying high motion to a “calm, serene landscape” will often result in the ground shifting like water. Finding the “golden ratio” between the text intent and the mechanical motion slider is the primary skill of a modern creative operator.
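One way to catch a mismatch before spending a render is a crude pre-flight check. The sketch below is purely illustrative: the keyword lists, the 0 to 255 motion scale, and the warning thresholds are all assumptions chosen for the example, not settings documented for Nano Banana or any other tool.

```python
import re
from typing import Optional

# Hypothetical vocabularies; tune these to your own prompt library.
HIGH_ENERGY = {"chase", "high-speed", "dynamic", "sprint", "explosive", "whip-pan"}
LOW_ENERGY = {"calm", "serene", "still", "gentle", "tranquil", "slow"}


def prompt_energy(prompt: str) -> int:
    """Positive for high-energy wording, negative for calm wording."""
    # Keep hyphenated terms such as "high-speed" together as one token.
    words = set(re.findall(r"[a-z]+(?:-[a-z]+)*", prompt.lower()))
    return len(words & HIGH_ENERGY) - len(words & LOW_ENERGY)


def check_alignment(prompt: str, motion: float, motion_max: float = 255.0,
                    low: float = 0.35, high: float = 0.65) -> Optional[str]:
    """Return a warning when prompt intent and motion setting disagree, else None."""
    level = motion / motion_max  # normalize the slider to 0..1
    energy = prompt_energy(prompt)
    if energy > 0 and level < low:
        return "high-energy prompt with a low motion setting: expect flicker or warping"
    if energy < 0 and level > high:
        return "calm prompt with a high motion setting: expect the scene to churn"
    return None
```

With these assumed defaults, `check_alignment("dynamic, high-speed chase", 40)` returns the first warning, `check_alignment("calm, serene landscape", 220)` returns the second, and the same calm prompt at 60 returns `None`. A keyword match is a blunt instrument; the point is only to make the prompt/slider pairing an explicit check rather than an afterthought.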

Managing Subject Interaction

Subject motion—such as a person waving, a liquid pouring, or a car driving—introduces the highest risk of incoherence. In the context of Nano Banana, the best results often come from separating the subject’s movement from the camera’s movement. If the camera is static, the AI can dedicate more of its “computational focus” to the fluidity of the subject. If both are moving rapidly, the background is likely to “bleed” into the foreground. For performance ads, a static camera with a dynamic subject (like a product being unboxed or demonstrated) usually yields the highest quality-to-render ratio.

The Workflow: From Static to Kinetic

A commercial-grade workflow rarely starts with a text-to-video prompt. It’s too unpredictable for brand-sensitive work. Instead, the process generally follows this trajectory:

- Asset Generation: Using a high-fidelity model to create the “hero” image. This ensures that the product looks correct and the lighting matches the brand guide.

- Refinement: Utilizing an AI Image Editor to clean up any artifacts, adjust the aspect ratio for social platforms, and ensure the focal point is clear.

- Motion Mapping: Taking that refined image into the video generator. Here, the operator chooses between the standard Nano Banana and the more robust Pro version, depending on the level of detail required.

- Iterative Looping: Generative video is rarely a one-shot process. An operator might generate five variations of the same 3-second motion path, selecting only the one where the subject’s face or product label remains stable.
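The selection step in the loop above can be partly automated. The sketch below scores each variation by its mean frame-to-frame pixel change inside a crop around the label or face and keeps the lowest score. It assumes the frames have already been decoded to grayscale and cropped so that the crop follows the subject (for example, with a tracker); on an untracked crop, intended camera motion would also raise the score. The helper names are hypothetical.

```python
from typing import Mapping, Sequence

# A frame is a 2D grid of grayscale values (0-255) for the cropped region.
Frame = Sequence[Sequence[float]]


def flicker_score(frames: Sequence[Frame]) -> float:
    """Mean absolute per-pixel change between consecutive frames (lower is steadier)."""
    total, count = 0.0, 0
    for prev, curr in zip(frames, frames[1:]):
        for row_prev, row_curr in zip(prev, curr):
            for a, b in zip(row_prev, row_curr):
                total += abs(a - b)
                count += 1
    return total / count if count else 0.0


def pick_most_stable(variations: Mapping[str, Sequence[Frame]]) -> str:
    """Return the name of the variation whose cropped region changes least."""
    return min(variations, key=lambda name: flicker_score(variations[name]))


if __name__ == "__main__":
    steady = [[[10, 10], [10, 10]]] * 3
    flickery = [[[10, 10], [10, 10]], [[50, 50], [50, 50]], [[10, 10], [10, 10]]]
    print(flicker_score(flickery))  # 40.0
    print(pick_most_stable({"take_1": flickery, "take_2": steady}))  # take_2
```

A score like this only ranks takes; an operator still needs to review the top candidate, since a region can be numerically steady while the label text is subtly wrong.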

Navigating Current Technological Limitations

It is important to maintain a level of skepticism regarding “zero-shot” video production. While the tools are rapidly advancing, there are still areas where the tech falters. High-speed human movement, particularly where limbs cross over one another (like running or dancing), remains a significant hurdle. If your creative requires perfect anatomical accuracy in high-motion scenarios, you may find that the AI still produces “melting” or “ghosting” effects.

Additionally, the physics of fluids and complex reflections can be hit-or-miss. A shot of soda being poured into a glass might look photorealistic in one generation and like a shimmering gel in the next. Recognizing these moments of uncertainty allows an operator to pivot the creative strategy (for example, focusing on a close-up of the condensation on the glass instead of the pour itself) to maintain the illusion of reality.

The Commercial Value of Iteration

The real strength of systems like Banana Pro is not just the ability to make one video, but the ability to iterate at the speed of data. If a performance marketer sees that “downward-tilt” camera shots are outperforming “horizontal pans” in A/B tests, they can quickly regenerate their entire asset library with that specific motion profile.
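Before regenerating a whole library around one motion profile, it is worth checking that the gap is larger than noise. A standard two-proportion z-test on click-through counts is one simple way to do that; it is a general statistical technique swapped in for illustration, not a feature of Banana Pro, and the counts in the example are hypothetical.

```python
import math


def two_proportion_z(clicks_a: int, views_a: int,
                     clicks_b: int, views_b: int) -> tuple[float, float]:
    """Two-sided two-proportion z-test on click-through rates; returns (z, p_value)."""
    p_a = clicks_a / views_a
    p_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)  # rate if there is no difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if se == 0:
        raise ValueError("no variation in clicks; the test is undefined")
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: downward-tilt variant vs horizontal-pan variant.
    z, p = two_proportion_z(120, 4000, 80, 4000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

With these made-up counts (3.0% vs 2.0% CTR on 4,000 impressions each), z comes out near 2.9 and p well below 0.01. The test relies on a normal approximation, so with small click counts an exact test is the safer choice.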

This shift from “artist” to “operator” is arguably the defining trend of 2024. The creative team’s value lies less in its ability to use a camera and more in its ability to manage the outputs of a system like Banana AI to ensure brand consistency and emotional resonance. By mastering motion coherence, teams can finally stop treating AI video as a novelty and start treating it as a reliable component of their performance pipeline.

Practical Judgment in Motion Selection

When selecting motion paths for an ad campaign, consider the viewer’s “eye-path.” AI-generated motion can sometimes be “drunken”: it wanders aimlessly without a clear point of interest. A professional operator uses tools like Nano Banana Pro to force more purposeful movement. A slight “push-in” (a dolly-in toward the subject) on a product creates a sense of importance, while a slow pan suggests luxury and scale.

Avoid the temptation to over-animate. In the world of performance creative, the most effective video is often the one where the motion is so smooth and natural that the viewer doesn’t even realize it was generated by an AI. The goal is total invisibility of the tool. When the motion is coherent, the viewer focuses on the message and the product, rather than the glitches in the matrix.

The path to scaling video creative is not through more hardware or bigger film crews, but through the disciplined use of generative tools that prioritize stability over spectacle. By focusing on controlled velocity and temporal coherence, marketers can finally unlock the true potential of the AI-driven creative cycle.